Sixfold Content

Sixfold News

Sixfold Partners with Adnovum

Sixfold is teaming up with Adnovum, the Swiss technology and consulting company specializing in secure digital transformation for the insurance industry.

Stay informed, gain insights, and elevate your understanding of AI's role in the insurance industry with our comprehensive collection of articles, guides, and more.

What is MCP - and Why It Matters for Underwriting

At Sixfold, we always integrate the latest AI advancements, but only when they truly help make underwriting faster, easier, and more accurate. One of the most promising technologies we’re exploring right now is Model Context Protocol, or MCP. Curious why? Read on.

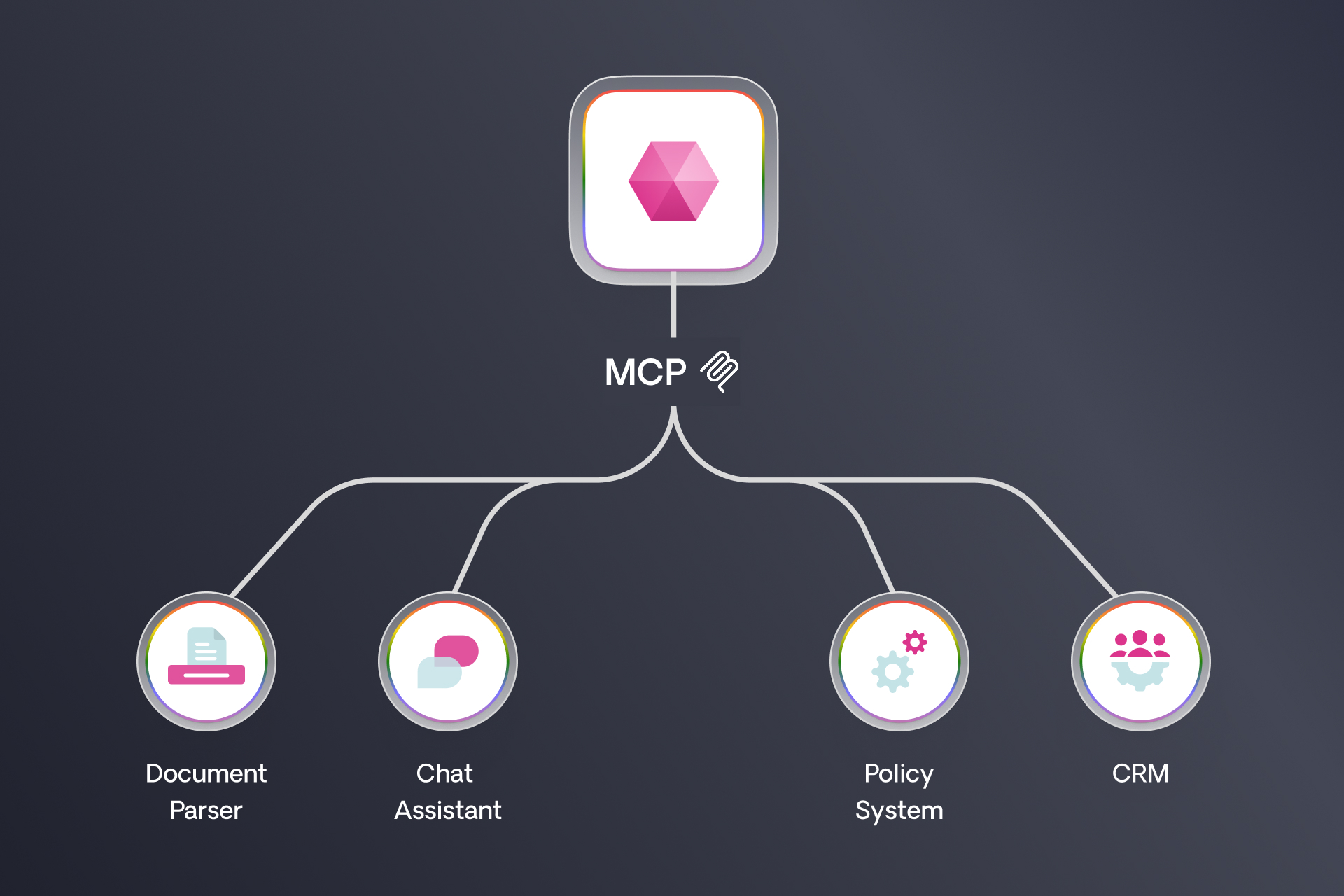

What Is MCP?

MCP is a way for different AI models, and the agents (read about agents here) that use them, to talk to each other.

Think of it like this: instead of manually connecting different systems when you want to share data, MCP lets one AI model pull context from another in real time, seamlessly and instantly.

Basically, instead of teaching every AI everything, you teach each one what it’s best at, and they learn to ask each other for help.

Who’s Behind It?

Since its introduction, MCP has gained traction among major AI providers:

Anthropic: The creator of MCP in November 2024, Anthropic has integrated the protocol into its Claude family of language models, enabling them to interact seamlessly with external systems.

OpenAI: In March 2025, OpenAI announced support for MCP across its Agents SDK and ChatGPT desktop applications, facilitating broader adoption of the protocol.

Google DeepMind: Shortly after OpenAI's announcement, Google DeepMind confirmed MCP support in its upcoming Gemini models and related infrastructure, highlighting the protocol's growing industry acceptance.

...and many more! It’s not just the AI giants. Tools like Linear, Zapier and Atlassian are jumping on board, signaling that MCP is becoming foundational infrastructure, not just for the leading LLMs but for the everyday tools teams use to get work done.

MCP + Underwriting AI?

Why is MCP relevant for AI underwriting technologies? Today, many insurers have their own internal AI tools. MCP basically turns all these isolated AI models into a connected ecosystem.

Here’s an example:

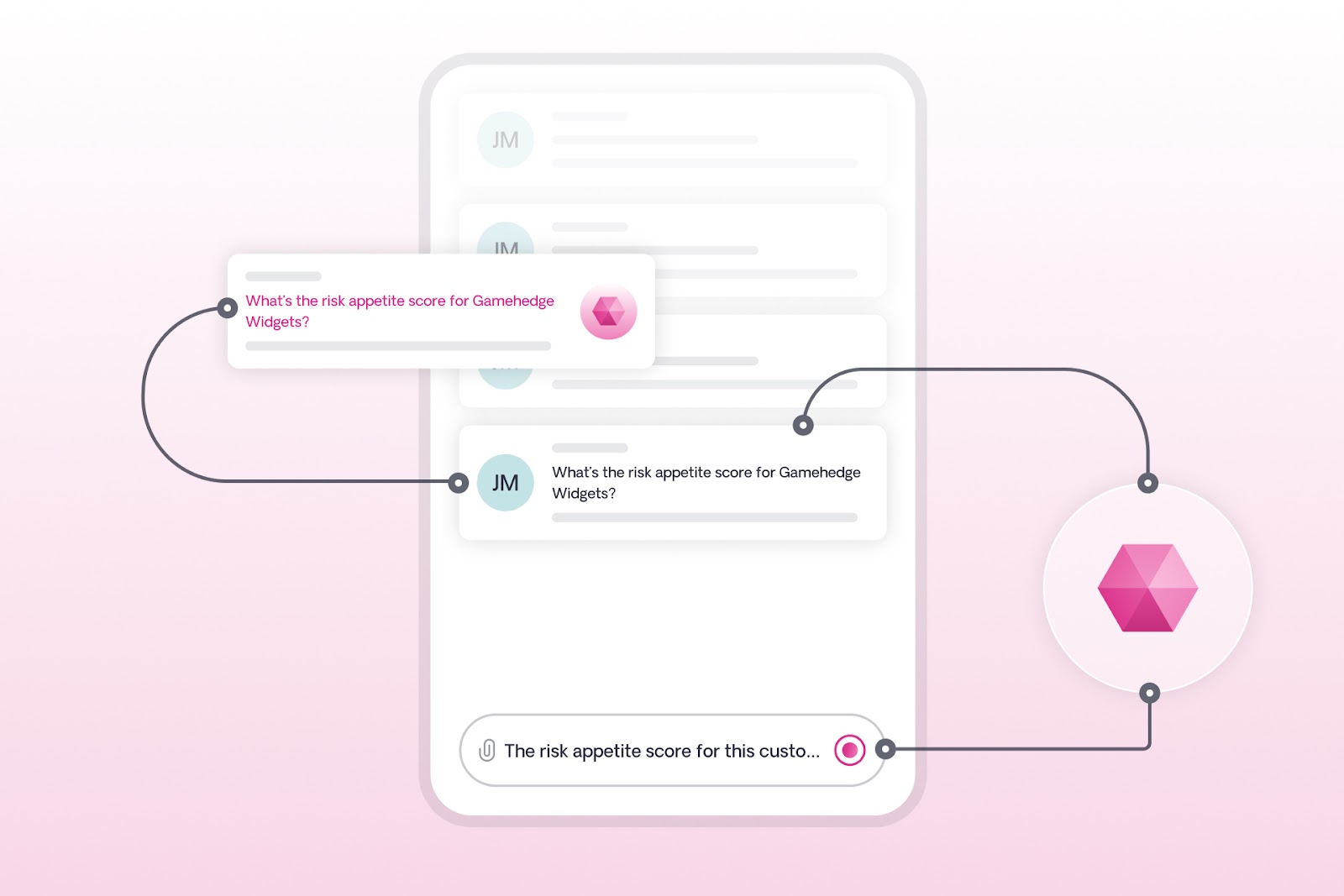

1. Let’s say a carrier has its own internal ChatGPT-like app as well as Sixfold's AI risk assessment solution.

2. With MCP, an underwriter using an internal chat tool, for example, can simply ask a question: “What’s the risk appetite score for this customer named Gamehendge Widgets?”

3. Their AI doesn’t need to know everything itself; it just knows who to ask, in this case Sixfold. It reaches out to Sixfold’s models, gets the answer, and serves it up directly to the underwriter.

No complex integration projects. No heavy lift for IT. MCP would act as a seamless bridge between Sixfold’s risk assessment expertise and the additional AI tools underwriters are using.
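To make the three steps above concrete, here is a minimal Python sketch of the exchange. It assumes MCP's JSON-RPC 2.0 framing and its `tools/call` method. The tool name `get_risk_appetite_score`, its argument, the score value, and the in-process `handle_request` function are hypothetical stand-ins for illustration, not Sixfold's actual interface, and the result shape is simplified.

```python
import json

# Hypothetical tool on the "Sixfold side". The tool name, its argument,
# and the returned score are invented for illustration only.
def get_risk_appetite_score(customer_name: str) -> dict:
    fake_scores = {"Gamehendge Widgets": 82}
    return {"customer": customer_name, "score": fake_scores.get(customer_name)}

TOOLS = {"get_risk_appetite_score": get_risk_appetite_score}

def handle_request(raw: str) -> str:
    """Stand-in for an MCP server: dispatch a JSON-RPC 'tools/call' request."""
    request = json.loads(raw)
    if request.get("method") != "tools/call":
        # -32601 is the JSON-RPC 2.0 "Method not found" error code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), "error": error})
    params = request["params"]
    # Simplified result: a plain dict rather than a full MCP result object.
    result = TOOLS[params["name"]](**params["arguments"])
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})

def ask_sixfold(customer_name: str) -> dict:
    """What the carrier's chat tool might do once it decides to ask Sixfold."""
    request = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {
            "name": "get_risk_appetite_score",
            "arguments": {"customer_name": customer_name},
        },
    }
    response = json.loads(handle_request(json.dumps(request)))
    return response["result"]

answer = ask_sixfold("Gamehendge Widgets")
print(answer)
```

In a real deployment the request would travel over a transport such as HTTP or stdio rather than a direct function call, and the security controls discussed later in this post would sit in front of the tool.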

Why Sixfold Cares (a Lot)

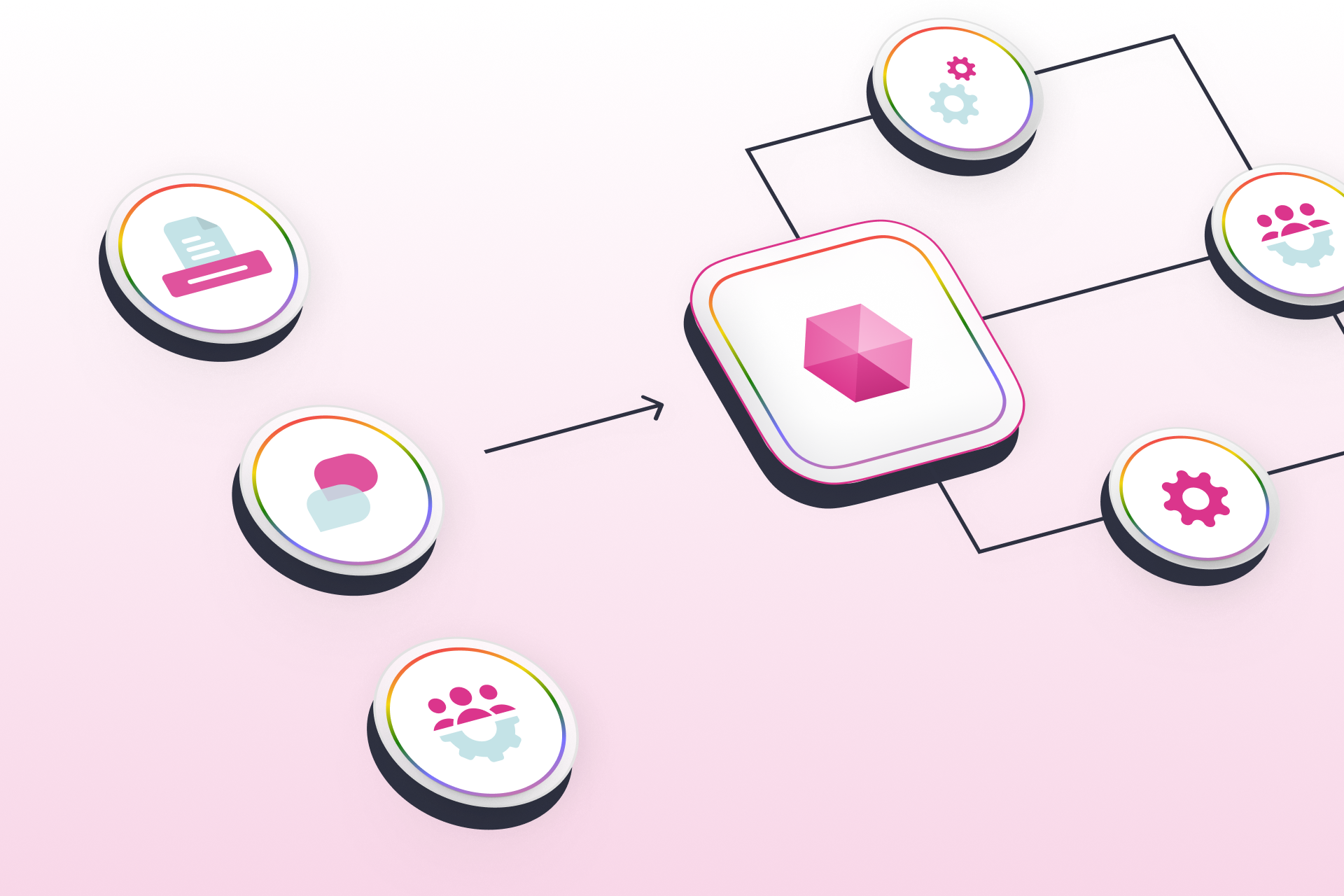

Right now, almost everyone is talking about "agentic" behavior: how AI agents plan and reason independently. MCP is the quieter, more practical sibling. It’s about getting the right data in the right place, way faster than before.

The impact MCP can bring:

- It can meet underwriters where they are today - inside the tools they already use every day

- It enables flexible adoption, so carriers can pull in just the capabilities they want without a massive rollout

- It’s a leap forward in making underwriting AI more accessible and useful

At Sixfold, we’ve made MCP connections between our models and other systems, and we’ve also exposed internal tools that AI chat assistants can use to query Sixfold’s underwriting insights.

What to Watch Out For

Like any new tech, MCP isn’t perfect. There are a few important risks to keep in mind:

Security:

- MCP is pretty quiet on the security mechanisms with which connected systems lock down their data. Existing enterprise-grade methods that companies like Sixfold use to protect sensitive customer data will need to be considered and implemented.

- Malicious tools could hide bad instructions if care is not taken on how different models talk to each other.

Accuracy:

- Incorrect or messy data leads to bad decisions. AI pulling data quickly doesn’t mean it’s always right, so double-checking and validation are key.

What’s Next with MCP?

MCP done right (e.g. secure, validated and tested over and over) could be a kind of quiet but transformative technology that will make AI in insurance not just smarter, but more practical.

From many steps to one: MCP collapses complex processes into simple interactions.

From heavy integrations to light connections: Carriers can plug into any AI expertise easily.

From standalone tools to connected ecosystems: Underwriters get the best of all worlds, without even noticing the heavy lifting happening in the background.

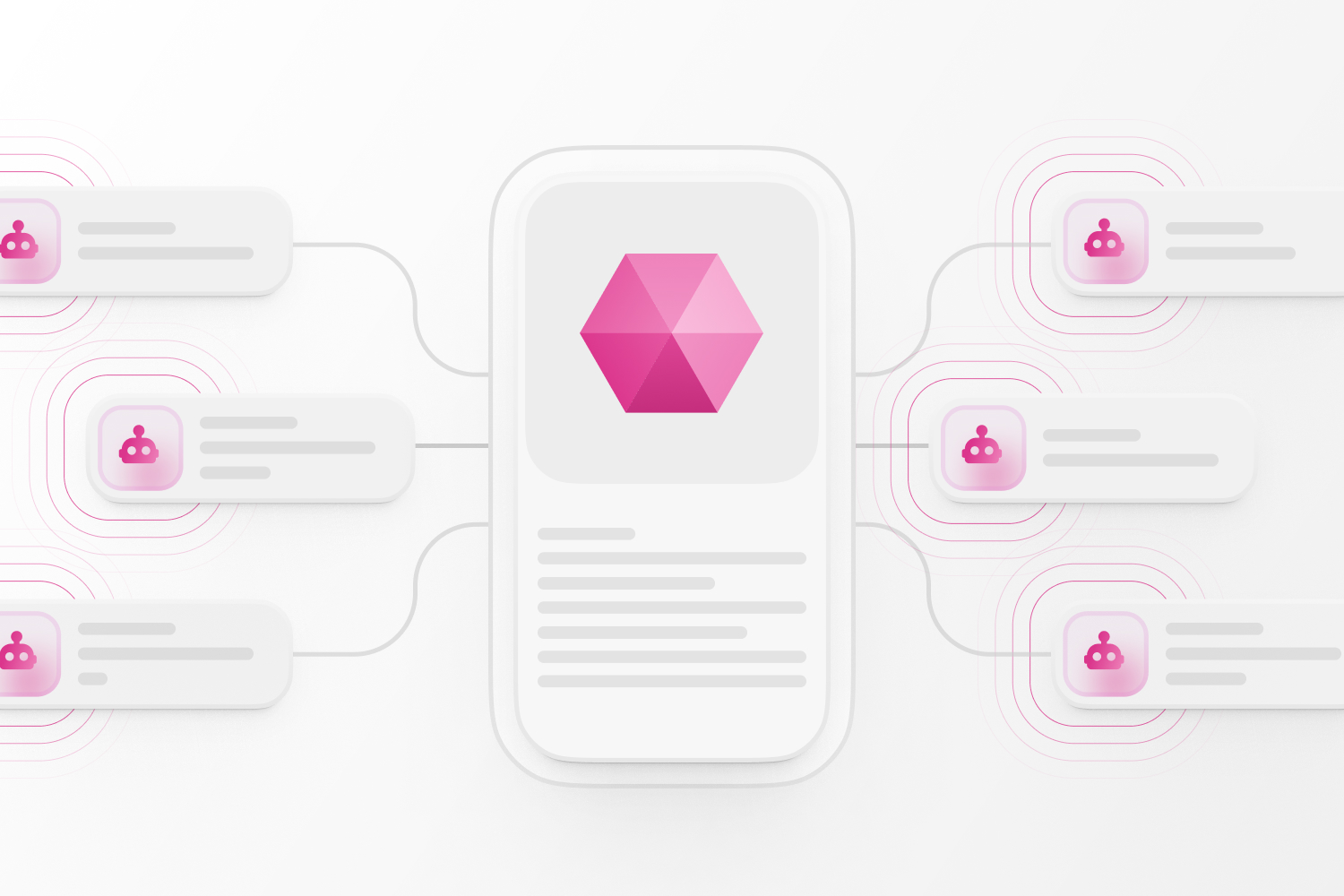

Emerging protocol: Agent-to-Agent (A2A). A2A was launched by Google in April 2025 and has already gained support from Microsoft. The protocol addresses the need for AI agents, often developed on disparate platforms, to communicate and collaborate. Even with certain similarities, MCP and A2A work well together instead of competing. Google says that A2A is meant to go alongside Anthropic’s MCP.

As more organizations roll out MCP and A2A, AI assistants and agents are getting way better, more up-to-date, more capable, and way more useful in the flow of work.

The future of AI in underwriting isn’t just about smarter models; it’s about models that collaborate and talk to each other.

Sixfold’s Take on AI Agents

Not sure what an AI agent is? You’re not the only one. We chatted with Sixfold CTO Brian Moseley to explain what agentic AI actually is, why it’s suddenly everywhere, why it matters for underwriting, and how we’re using it at Sixfold.

Not sure what an AI agent is? You’re in good company. We sat down with Sixfold CTO Brian Moseley to unpack:

- What “agentic AI” really means

- Why it’s suddenly everywhere

- Why it matters for insurance underwriting

- How we’re putting this technology to work at Sixfold

The Definition Dilemma

What exactly are agents in the context of AI?

That’s harder to answer than it should be.

The industry hasn’t coalesced around a standard definition. According to swyx, a software engineer might say that an agent is “LLM calls in a for loop.” Pretty reductive. If you buy them a beer, they’ll probably start talking about tools, memory, and LLM-based control flow - fascinating but deeply technical concepts that don’t offer much pragmatic insight.

For my money, Addy Osmani offers the most useful working definition. He says that “AI agents [...] are autonomous systems designed to perceive their environment, make decisions, and take actions to achieve specific goals, all while maintaining context and adapting their approach based on results.” Basically, an agent can accomplish a complex task by choosing how to progress through a complex, evolving process without ongoing human input. Contrast this with the typical chatbot interaction, where a human submits a sequence of one-shot requests.

How do you define the difference between a workflow and an agent?

The key difference is that a workflow is consistent and predictable. Given the same set of inputs, it will proceed the same way every time. A workflow can be dynamic, with conditional or looping steps, but it is inherently deterministic.

An agent’s process is driven by probabilities. Even if given the same inputs twice in a row, an agent may choose a different plan of attack each time and will almost certainly generate different outputs on every run. You can reason about what is likely to happen, but there are no guarantees. You have to be comfortable with a range of outputs and have some tolerance for error.
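The contrast between a workflow and an agent can be sketched in a few lines of Python. This is a toy illustration only: the step names are hypothetical, and `random.Random` stands in for an LLM choosing the next action, which a real agent would do by weighing context rather than sampling uniformly.

```python
import random

# Hypothetical underwriting steps, named for illustration only.
STEPS = ["classify_business", "check_appetite", "summarize_risk"]

def run_workflow(submission: str) -> list[str]:
    """Deterministic: the same input always yields the same step order."""
    return [f"{step}({submission})" for step in STEPS]

def run_agent(submission: str, rng: random.Random) -> list[str]:
    """Probabilistic: each next step is sampled, so plans vary between runs."""
    remaining = list(STEPS)
    plan = []
    while remaining:
        step = rng.choice(remaining)  # stand-in for an LLM picking an action
        remaining.remove(step)
        plan.append(f"{step}({submission})")
    return plan

# The workflow is repeatable.
assert run_workflow("acme") == run_workflow("acme")

# The agent may order its steps differently on each run.
for _ in range(3):
    print(run_agent("acme", random.Random()))
```

The agent still covers the same steps here, but nothing guarantees the order, which is why evaluating agents means reasoning about a range of outcomes rather than one expected trace.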

The flexibility is exactly what makes agents so powerful, and why they’re now at the center of so many AI conversations.

Why Everyone’s Talking About Them

Why do you think agents have become such a hot topic?

Businesses are always looking for ways to do more with their resources. First, we automate repetitive tasks that are consistent and predictable from one execution to another. But once those possibilities have been exhausted, the next leap is enabling software to handle more complex, reasoning-based work.

If this is successful, we can offload even more, not just the rote work that is often done on autopilot, but also the tasks that require real thinking. That’s the promise.

But it gets even better. We can envision a world where, as the cost of intelligence is driven down, every worker becomes a manager of a team of agents, setting goals, reviewing work, testing many ideas simultaneously, and picking the best with high confidence. This goes beyond improving productivity — it’s evolution.

Where Agents Fit Into Underwriting

Where do you see agents making an impact in underwriting?

The underwriting process itself is relatively structured and rules-based. But within that structure, underwriters deal in nuance, like recognizing when a 10-story building without sprinklers is still acceptable due to fire-resistive construction and strong safety measures, or approving a contractor engaged in high-risk work because of rigorous safety protocols and certified staff. They have to understand a risk so completely that they can craft a very thoughtful quote and risk management solution for the customer.

That’s where agents come in. The underwriting workflow itself might remain orchestrated traditionally, but each step, the "needle-in-the-haystack" moments, could be powered by intelligent agents making context-based decisions. And instead of one underwriter doing all the work, an entire network of specialized agents can be turned loose on the risk to each solve a specific, context-rich problem, then synthesize the findings.

Agents at Sixfold

How are we using agents across Sixfold today?

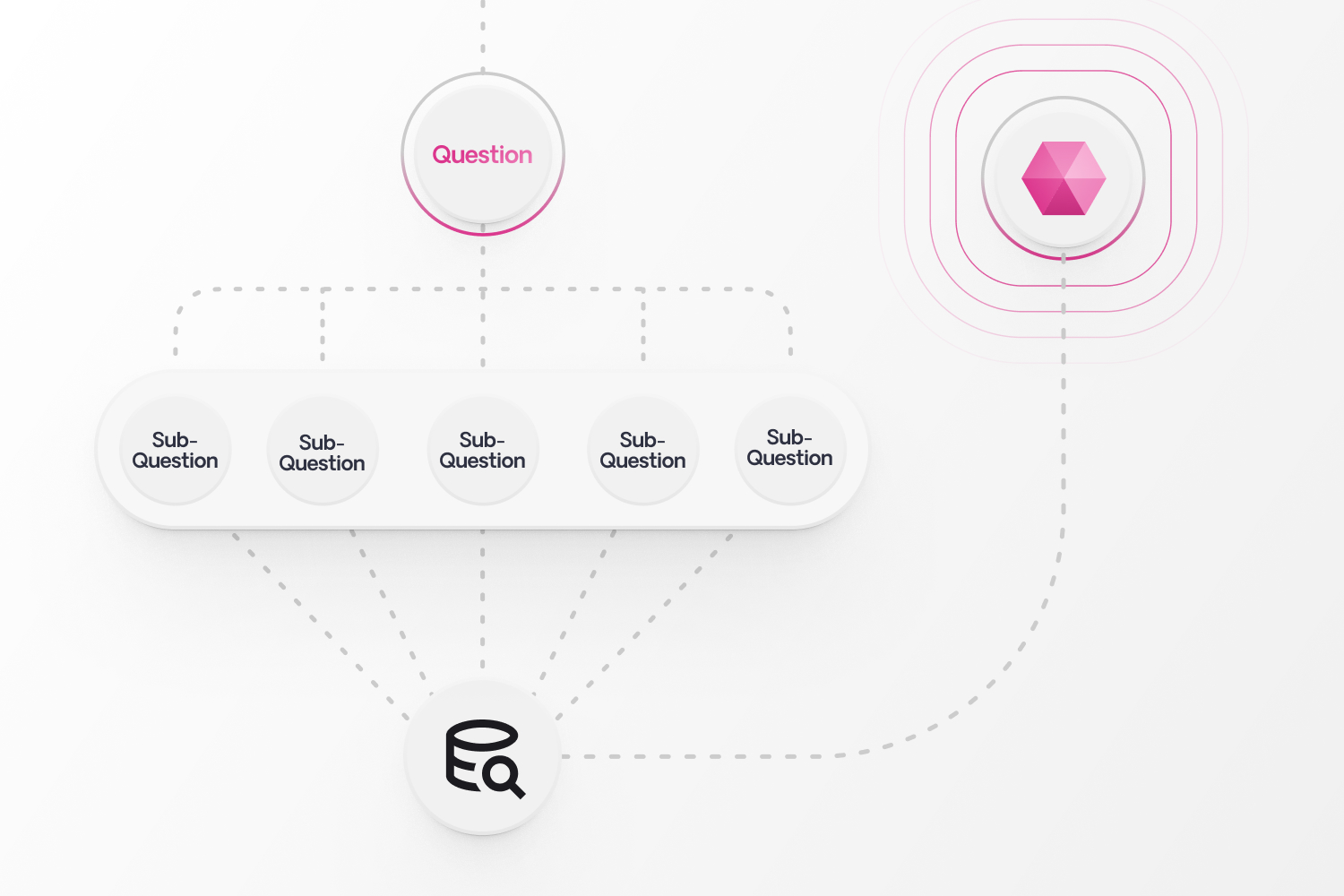

The most obvious starting point is our Q&A feature. It starts with pre-set underwriting questions, scanning applicant data to find the answers, and summarizing them for the underwriter. While the feature began life as a simple one-shot LLM interaction, it has evolved over time. Now, if the input is complex, the system breaks it down into simpler sub-questions, answers each separately, and then summarizes those outputs into a final response.
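The decompose, answer, and summarize pattern described above might look roughly like the following Python sketch. It is not Sixfold's implementation: `split_question` uses a naive string split where a real system would likely ask an LLM for sub-questions, and `lookup` is a hypothetical stand-in for scanning applicant data.

```python
from typing import Callable

def split_question(question: str) -> list[str]:
    """Toy decomposition: split a compound question on ' and '."""
    parts = [part.strip(" ?") for part in question.split(" and ")]
    return [part + "?" for part in parts if part]

def answer_question(question: str, answer_one: Callable[[str], str]) -> str:
    """Answer simple questions directly; break complex ones into parts."""
    subs = split_question(question)
    if len(subs) == 1:
        return answer_one(subs[0])
    partials = [answer_one(sub) for sub in subs]
    # Stand-in for a summarization step: join the partial answers.
    return " ".join(partials)

def lookup(question: str) -> str:
    """Hypothetical answerer backed by a tiny table of invented facts."""
    facts = {
        "does the building have sprinklers?": "No sprinklers are installed.",
        "what is the construction type?": "Construction is fire-resistive.",
    }
    return facts.get(question.lower(), "Not found in the submission.")

compound = "Does the building have sprinklers and what is the construction type?"
print(answer_question(compound, lookup))
```

Passing the answerer in as a function keeps the control flow separate from whatever model or data source actually produces each partial answer.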

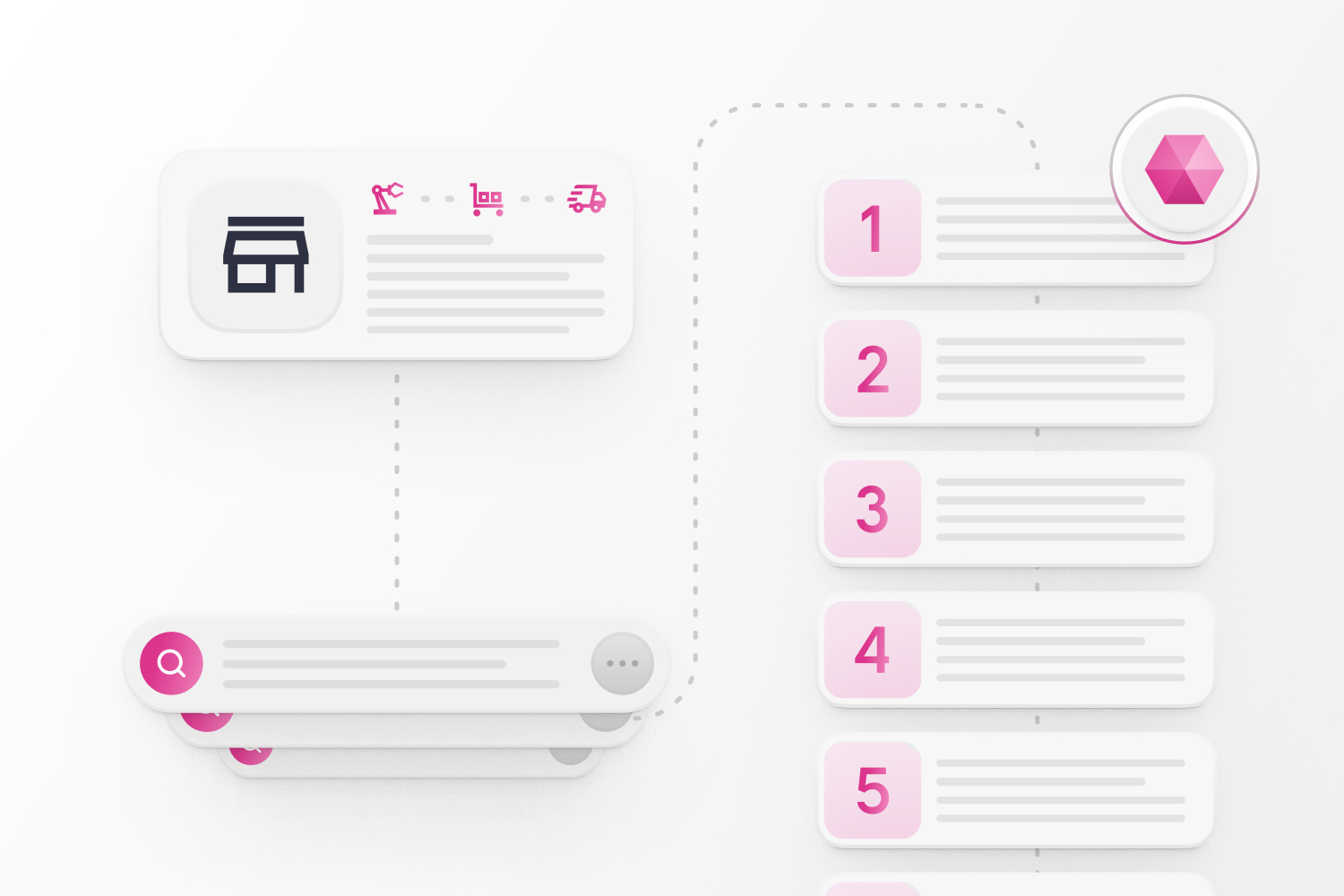

Next up is our Research Assistant, an agent that performs targeted web searches depending on the context of the business. For a given question, it decides how to search, what sources to consult, and how to rank results. We anticipate the research assistant will boost our ability to answer 30% more critical questions, giving underwriters a more complete understanding of risk before quoting.

We’re researching ever-more advanced techniques for document extraction. We’re experimenting with agentic approaches to classifying documents and routing to customized pipelines that can better extract information from complex structured documents like loss runs—use cases where traditional methods often fall short.

The Future of Agents

Are there limitations to agents today?

Technically, we’re still in the early days. As discussed earlier, we can’t even agree on a shared definition yet. And the methods by which agents interact with their environment (MCP) and each other (A2A) are evolving quickly.

But there are plenty of tools that help engineers build and deploy agents, singly or in swarms, and the landscape is rich and competitive. Amazing engineers like Simon Willison are doing yeoman’s work to help us understand the new models, tools, and techniques that pop up every day. It’s a great time to be a builder!

I think the bigger challenge is societal. What does the world look like when every person has a thousand agents at their fingertips? How will we experience the changes that advance us to that point? What will work - and leisure - look like?

Are there historical tech shifts you’d compare this to, like the internet or the iPhone?

Those were 10x changes, but what we’ve seen and will see in the five years after ChatGPT feels like 100x or even 1,000x. I don’t think anything else in modern history really compares.

There’s a lot of agentic hype right now, and some of it feels over the top, like the idea that AI will be writing most code in just a few months. You have to take some of this stuff with more than one grain of salt.

But overall, this feels different. Even if I’m naturally skeptical about the flavor of the day, I think there’s something real and lasting here, and we are leaning heavily into it at Sixfold. On top of what I mentioned earlier, we’re working through several new concepts that could make it into our roadmap.

That said, we’re not doing agents for the sake of it. At the end of the day, it has to make sense for underwriters. We’re all about using the best tool for the job, regardless of what everybody happens to be talking about at the moment.

References

latent.space/p/agent

docs.google.com/presentation/d/1SWoBIvTQu__uNEvSawmNcROiUx-n86O_fP0arZcTGb8/edit#slide=id.g2d9839ccb1c_0_0

addyo.substack.com/p/what-are-ai-agents-why-do-they-matter

huyenchip.com/2025/01/07/agents.html

The Role of AI and IDP in Underwriting’s Future

Underwriting faces a data challenge, with manual processes consuming up to 40% of underwriters’ time. While IDP tools digitize data, underwriting AI goes further—providing actionable insights and enabling smarter risk analysis to improve efficiency and accuracy.

Insurance underwriting has long struggled with a data challenge: finding a way to handle the daily flood of information quickly and accurately. Why? Because the ability to process data significantly impacts the profitability and growth of insurers.

If this sounds familiar, here’s some good news: there’s now a better way to tackle these challenges. Underwriting-focused AI is transforming underwriting by processing complex data, providing risk summaries, and delivering tailored risk recommendations—all in ways that were previously unimaginable.

Most of the information underwriters need to make an underwriting decision comes in a mix of structured, semi-structured, and unstructured formats, including various types of documents such as broker emails, application forms, and loss runs. This data is often handled manually, consuming 30% to 40% of underwriters' time and ultimately impacting Gross Written Premium (McKinsey & Company).

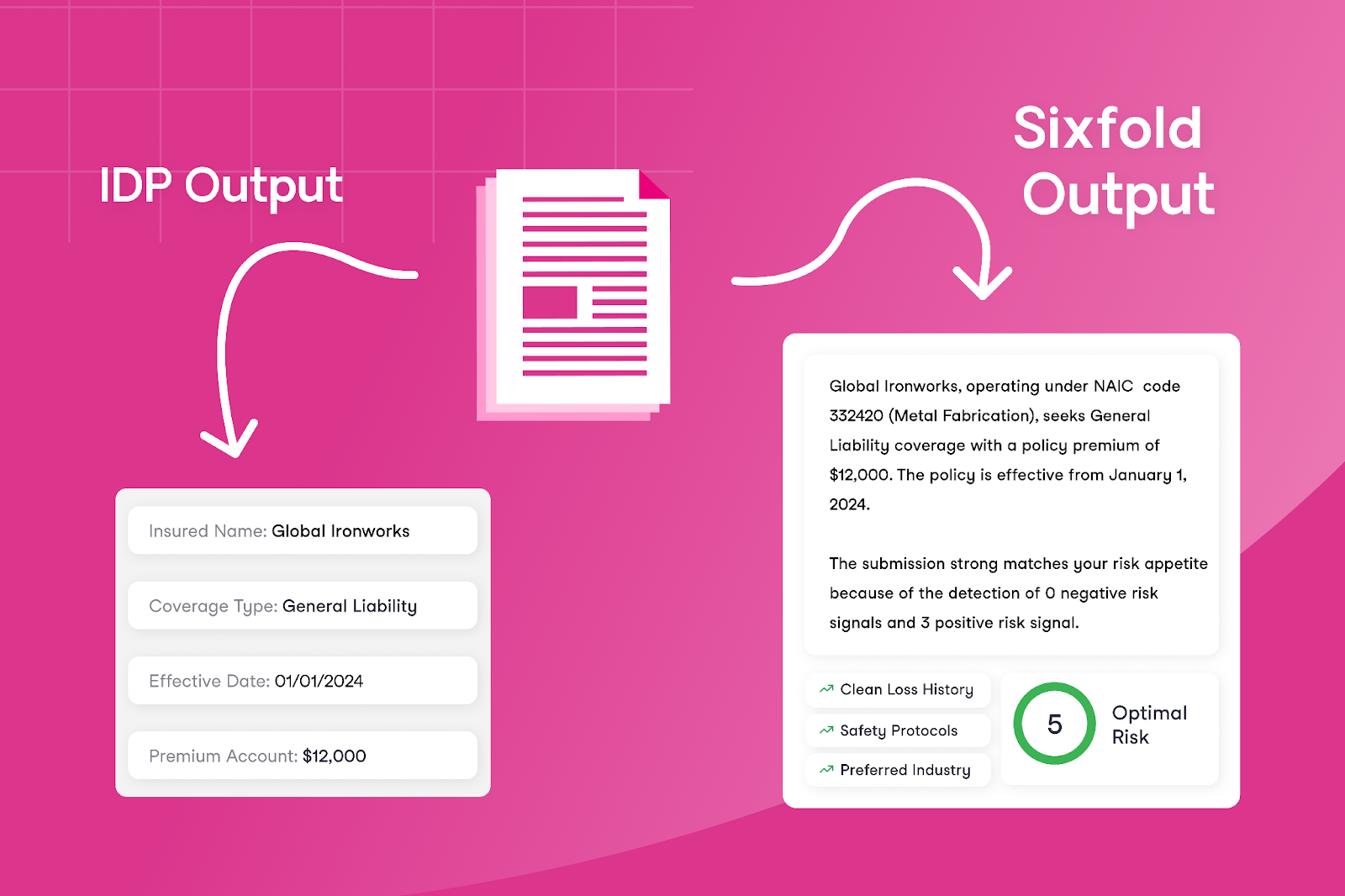

To address this, insurers have long sought technological solutions. Intelligent Document Processing (IDP) tools were a key step forward, using technologies like Optical Character Recognition (OCR) to extract and organize data. However, while IDP helps digitize information, it doesn’t fully solve the problem of turning data into actionable insights.

Comparing IDP and Underwriting AI

IDPs have been the predominant way of approaching underwriting efficiency, primarily used by insurers to automate tasks in underwriting, policy administration, and claims processing. These tools focus on converting generic documents into structured data by capturing text, classifying document types, and extracting key fields, offering a general solution across different industries.

Digitizing documents sounds like a no-brainer, right? But what happens if you go beyond simply digitizing documents? Underwriting AI makes this possible by offering a new approach to improving efficiency and accuracy for underwriters: it not only brings in all the data but also generates risk analysis and actionable insights. AI empowers underwriting teams to focus only on the information that truly matters for underwriting decisions.

The difference between these technologies becomes clearer when comparing the outputs of underwriting AI with traditional technologies like IDPs. Instead of only extracting every data field, risk assessment solutions that leverage LLMs, like Sixfold, use their trained understanding of what underwriters care about to decide what risk information to summarize and present to underwriters.

This approach differs from IDP by focusing on presenting contextual insights for underwriters, such as risk patterns and appetite alignment. Instead of only providing the extracted data, it highlights key information that directly supports faster decision-making.

Different Approaches to Accuracy

By now, we already know that IDPs and AI solutions aim to improve efficiency and save underwriters time. But what about accuracy? Accuracy is the key component of a successful underwriting decision, which is why evaluating it is so important for tools focused on supporting underwriters. Let’s highlight the differences between what matters for IDP versus AI tools in terms of accuracy.

IDPs - Accuracy is about precise field extraction

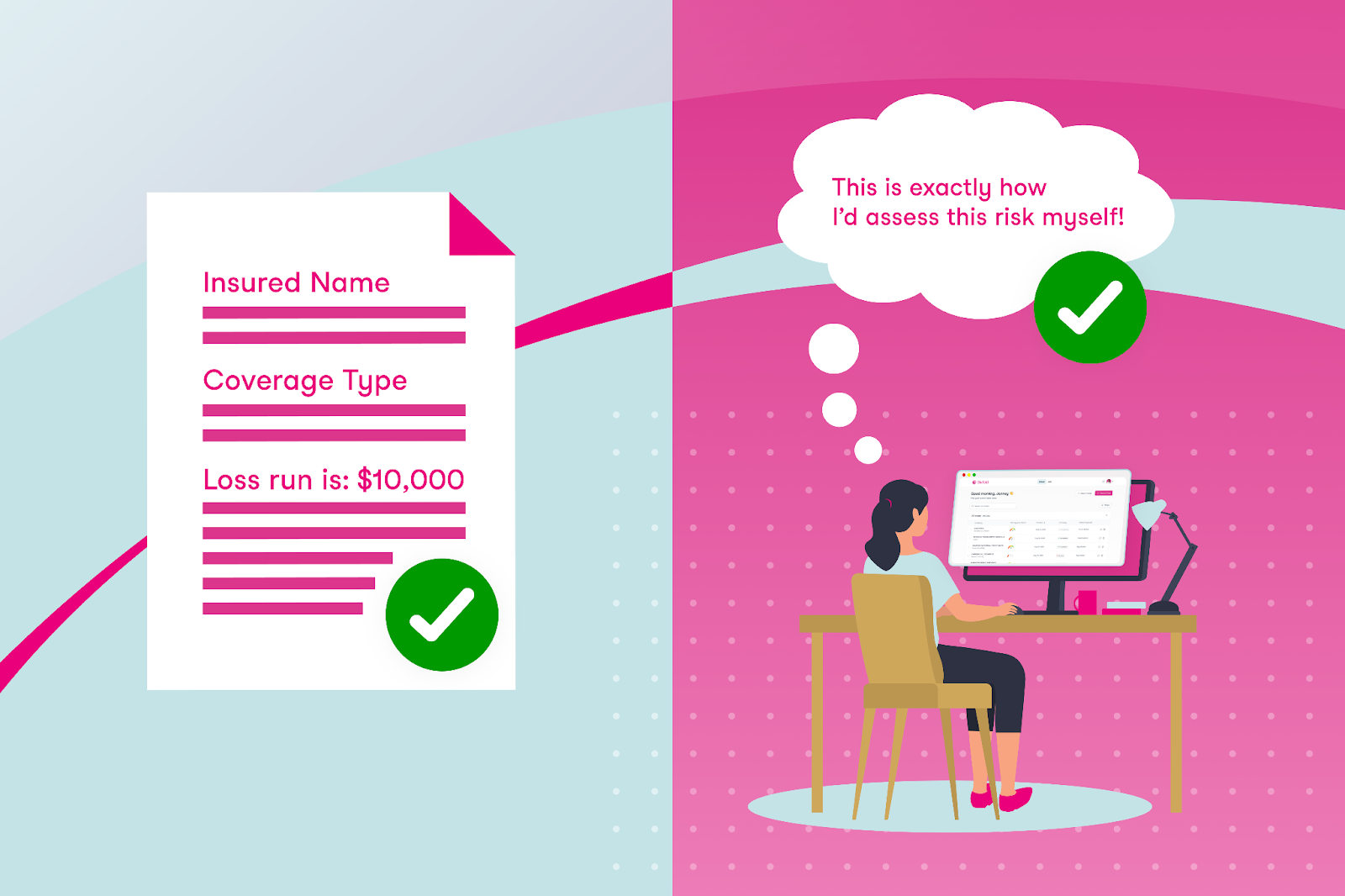

IDPs focus on data processing, so their success is measured by how accurately they extract each text field. This makes sense given their role in automating structured data collection. If the data field in a document says “Loss run is: $10,000” and the IDP extracts “$1,000” then it’s easy to say that it was not an accurate extraction.

Underwriting AI - Accuracy is about human-like reasoning

The accuracy of underwriting AI lies in its ability to align reasoning and output with the task, presenting the key risk data underwriters themselves would choose to prioritize. Evaluating AI accuracy therefore means determining how closely the AI mirrors an underwriter in assessing risk submissions.

The reality is that, when AI is used for risk assessment rather than pure data extraction, small mistakes don't matter as much. For example, consider a loss run: as an underwriter, what’s important isn’t necessarily every individual line or small loss but rather recognizing patterns, such as total losses exceeding $10,000 within a specific timeframe or recurring trends in certain types of losses. An underwriting AI can uncover these insights by focusing on significant trends and aggregating relevant data, looking at a case the same way a human would.
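The two notions of accuracy can be contrasted in a short Python sketch. Everything here is an assumption for illustration: the loss-run rows are invented, and the function names are hypothetical. `field_accuracy` scores exact field matches the way an IDP evaluation might, while `total_losses` and `recurring_types` look for the kind of patterns an underwriter cares about.

```python
from datetime import date

# Hypothetical loss-run rows: (date of loss, loss type, amount in USD).
loss_run = [
    (date(2022, 3, 1), "water damage", 4_000),
    (date(2022, 9, 15), "water damage", 5_500),
    (date(2023, 1, 20), "theft", 1_200),
    (date(2019, 6, 5), "fire", 9_000),
]

def field_accuracy(extracted: dict, truth: dict) -> float:
    """IDP-style metric: share of fields extracted exactly right."""
    correct = sum(extracted.get(key) == value for key, value in truth.items())
    return correct / len(truth)

def total_losses(rows, start: date, end: date) -> int:
    """Pattern-style check: aggregate losses inside a time window."""
    return sum(amount for day, _, amount in rows if start <= day <= end)

def recurring_types(rows, min_count: int = 2) -> list[str]:
    """Pattern-style check: loss types that show up repeatedly."""
    counts: dict[str, int] = {}
    for _, loss_type, _ in rows:
        counts[loss_type] = counts.get(loss_type, 0) + 1
    return sorted(t for t, n in counts.items() if n >= min_count)

# One wrong digit makes the extracted field simply incorrect.
truth = {"total_loss": "$10,000", "insured": "Acme"}
extracted = {"total_loss": "$1,000", "insured": "Acme"}
print(field_accuracy(extracted, truth))

# The pattern view asks a different question of the same kind of data.
window_total = total_losses(loss_run, date(2022, 1, 1), date(2023, 12, 31))
print(window_total > 10_000, recurring_types(loss_run))
```

The first metric penalizes any single-field error, while the second stays useful as long as the overall totals and trends are right.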

How to Choose the Right Tool?

The answer isn’t as simple as this or that. There are successful examples of insurers using one, the other, or even both solutions, depending on their needs.

IDP can be a great tool for extracting fields like names, addresses, and other key information from documents to feed into downstream systems. Meanwhile, AI-focused technologies like Sixfold are great for risk analysis. It’s important to note that a company doesn’t need to have an IDP solution in place to adopt an AI solution like Sixfold – these technologies can work independently.

To decide which use of technology is the best fit for your underwriting needs – whether it’s one of these solutions or potentially both – consider the specific challenges you’re addressing. If your goal is to reduce the manual workload for underwriters, start by identifying the exact inefficiencies. For instance, if the issue is that underwriters spend a significant amount of time manually extracting text fields from one document and re-entering them into another system, IDP could be a good option: it’s built for that kind of work, and extracting fields is what you are paying for.

However, if you want to address larger inefficiencies in the underwriting process — such as reducing the total time it takes for underwriters to respond to customers — you’ll need to tackle bigger bottlenecks. While it’s true that manual data entry is a challenge, much more of underwriters’ time is spent on manual research tasks such as reviewing hundreds of pages looking for specific risk data, or finding the right NAICS code for a business. These are areas where underwriting AI would be better suited.

So, What's Next for Underwriting?

IDP can efficiently digitize key information, but it doesn’t solve the broader challenges of manual inefficiencies that underwriters deal with daily.

Imagine a future in underwriting where IDP effortlessly extracts core data fields essential for accurate rating, ensuring consistent and reliable input into downstream systems. Meanwhile, specialized underwriting AI takes on the rest of the heavy manual lifting—matching risks to appetite, delivering critical context for decisions, and significantly reducing the time it takes to quote.

By combining the OCR capabilities of IDP with the intelligence of underwriting AI, insurers can make underwriting a lot less manual. These tools promise a 2025 where underwriters spend less time on repetitive tasks and more time making smart risk decisions.

This post was originally posted on LinkedIn

Just Launched: Beyond the Policy 🚀

Here at Sixfold, we talk to underwriters all the time—on calls, in meetings, at conferences, and through feedback on everything we create. Why? Because understanding the real challenges underwriters face is at the core of what we do.

From those conversations, we realized there’s no central digital space where underwriters can stay updated on industry innovations, exchange insights, and find tools to grow their careers.

That got us thinking — what if we built that place?

So, we did! I’m super excited to introduce Beyond the Policy, Sixfold’s innovation hub designed exclusively for underwriters. Here’s what you can expect:

Real Stories From the Frontlines

Why? Great advice comes from those who’ve faced the same challenges. That’s why Beyond the Policy features interviews with experienced underwriters who share their perspectives on the challenges and opportunities shaping the industry today. These Q&As provide practical insights, lessons learned, and tips you can apply directly to your own work.

We began our interviews with underwriters from major players like Sompo International and NSM, as well as from smaller MGAs and consultancies, ensuring diverse (and always personal) perspectives.

AI Crash Course for Underwriting Leaders

Why? AI is transforming underwriting, but getting started can feel overwhelming. To help, we’ve created a crash course specifically for underwriting leaders. It’s designed to provide clear, actionable steps for getting started.

This course brings together insights from top AI experts across diverse fields, including the Head of AI at Sixfold and a Lead AI Counsel at Debevoise & Plimpton. Whether you're new to AI or looking to enhance your approach, this resource is a great starting point.

Will we launch more courses in the future? Absolutely - stay tuned for our next one.

The Best of the Web for Underwriters

Why? The internet is full of information, but finding what’s truly relevant can be a challenge. That’s why we’re doing the work for you. Beyond the Policy features curated content tailored to underwriters, pulling together key industry updates and trends - and updating it frequently. Fresh content, every week!

This week's recommended content includes a great episode from The Insurance Podcast featuring Send (underwriting insurance software) and an article from Deloitte on the insurance outlook in 2025.

Monthly Emails You’ll Actually Want to Read

Why? We know you’re busy, so we’ve packed our monthly emails with the best of what Beyond the Policy has to offer. Expect updates on the latest Q&As, new resources like the AI crash course, and handpicked articles that are worth your time.

These emails are designed to keep you ahead (without adding to your email load).

➡️ Beyond the Policy is now live!

If you’re an underwriter, I invite you to check it out, sign up for our monthly emails and hopefully learn a new thing or two.

Enjoy!

AXIS Adopts Sixfold’s Purpose-Built AI Solution

October 18, 2024 - Sixfold, the AI solution designed to streamline end-to-end risk assessments for underwriters, announced its partnership with AXIS, a global leader in specialty insurance and reinsurance, a collaboration that has yielded positive results in its initial rollout. Within the first month of deployment, AXIS underwriters leveraged Sixfold’s solution to improve efficiency, accurately classifying businesses and aligning cases with their risk appetite.

“This partnership is all about leveraging AI to empower our underwriters and even further enhance the service we provide to our customers. We were searching for a solution that could reliably deliver precision, and Sixfold has done just that and more.

The real game-changer has been the time savings—freeing up valuable hours so our underwriters can zero in on the work that drives results while ultimately benefiting the customer,” said Josh Fishkind, Head of Innovation at AXIS.

“Our goal is to provide meaningful ROI for all our customers, and AXIS has already begun to see these benefits,” said Alex Schmelkin, Sixfold's Founder & CEO. “We look forward to continuing our partnership as AXIS discovers more ways Sixfold can enhance their underwriting processes.”

Read the full customer story here and check out the Insurance Post article covering our work with AXIS.

Sixfold Partners with CyberCube

The partnership with CyberCube aligns with the strategy of utilizing the best data sources to streamline underwriting, keeping insurers ahead as cyber insurance premiums are projected to reach $20 billion by 2025.

In 2025, the cyber risk landscape is expected to become more complex with increasing threats driven by rising privacy violations, data breaches, the rise of AI, and external factors such as emerging regulations. According to Munich Re, the cyber insurance market has nearly tripled in size over the past five years, with global premiums projected to surpass $20 billion by 2025, up from nearly $15 billion in 2023, as reported by CyberSecurity Dive.

Reflecting the rapid market growth and emerging threats, Sixfold has seen increased demand from specialty insurers in the cyber sector and has successfully brought on several industry leaders as customers. "In the near future, cyber policies will become as essential as General Liability or Property & Casualty coverage. Given the world we live in, this shift is inevitable. Cyber policies are poised to become the most specific and highly customized policies available," said Jane Tran, Co-founder & COO at Sixfold.

Empowering Underwriters to Quickly Adapt to New Cyber Risks

As cyber risks grow, the pressure on underwriters to assess risks accurately and expedite the case review process continues to increase. Sixfold’s AI solution for cyber insurance addresses these challenges by securely ingesting each insurer’s underwriting guidelines and aggregating all necessary business information to quickly provide recommendations that align with the carrier’s risk appetite. This capability allows insurers to quickly adjust their risk strategies in response to new cyber threats.

“With Sixfold, insurers can synchronize their underwriting guidelines across the board and adapt quickly. For example, when a new malware threat is identified, you can instantly incorporate it into your risk criteria through Sixfold. This ensures that the entire cyber team factors it into their assessments immediately without needing to learn every detail of the threat or spending hours digging for the right information,” said Alex Schmelkin, Founder & CEO of Sixfold.

Besides, effective cyber underwriting demands deep expertise in IT systems, cybersecurity measures, and industry developments. This need for specific expertise presents a significant talent issue for insurers, especially with 50% of the underwriting workforce set to retire by 2028. Sixfold bridges the knowledge gap by instantly providing underwriters with the specialized knowledge they need for accurate risk assessments.

“Underwriters no longer need to be cyber experts; they can rely on Sixfold to spotlight the critical information needed for accurate underwriting decisions. Our platform simplifies the complex world of cyber risk and empowers underwriters to make more confident decisions, faster,” said Jane Tran, Co-founder & COO at Sixfold.

Sixfold Partners with CyberCube to Enhance Cyber Risk Assessments

Sixfold has teamed up with CyberCube, the world’s leading analytics provider to quantify cyber risk. This integration of CyberCube's advanced cyber risk analytics with Sixfold's AI underwriting solution enables insurers to achieve faster and more accurate risk assessments. The partnership enhances underwriting efficiency, strengthens regulatory compliance, and offers highly tailored cyber insurance solutions, empowering insurers to stay ahead of the rapidly evolving cyber threat landscape. “The partnership between CyberCube’s comprehensive cyber data and Sixfold’s innovative risk assessment is setting a new standard for the future of underwriting, keeping insurers prepared for new challenges in determining accurate cyber policies,” said Ross Wirth, Head of Partnership and Ecosystem for CyberCube.

To see how Sixfold speeds up the cyber underwriting process, join our upcoming live product demo.